Satya Nadella’s Post

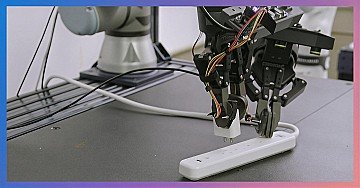

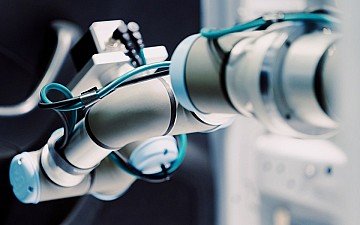

Introducing Rho-alpha, our newest robotics model from Microsoft Research. Rho-alpha turns natural language into precise control for bimanual robotic manipulation, adding new sensing modalities and learning from human feedback. Looking forward to what’s to come as we bring physical AI into the real world. https://lnkd.in/g5pYPn5f

With Microsoft’s expansion into orchestration-driven robotics via Rho-alpha, the scope of unlicensed use of patented AI infrastructure deepens. From enterprise software to physical robotics, Roots Informatics holds enforceable claims over model-first orchestration and contextual signal routing. These capabilities are not open; they are protected. We welcome serious discussions on licensing, jurisdiction, and structured remedies.

This is a meaningful step in making robotics more intuitive and accessible. By leveraging language, you’re lowering the barrier for human-guided automation. Excited to follow Rho-alpha’s journey into real-world environments.

Rho-alpha defines a 'SelfadaptiveRobot.' Its multiple sensing modalities (vision, force, tactile) form a real-time data model of the physical environment, while continuous human feedback directly fine-tunes its control policies. This creates a closed-loop system in which the robot doesn't just execute commands; it learns from physical interaction and human judgment to adapt its behavior on the fly, perfecting tasks like assembly or manipulation with increasing autonomy.

Translating natural language into precise, bounded control is the real challenge in physical AI. The combination of multimodal sensing and human feedback feels critical, not just for capability, but for safety and reliability as these systems move into the real world.

Finally I can attach robotic arms and legs to my Zune

Microsoft Research's Rho-alpha model is a major breakthrough in robotics and artificial intelligence (AI), making physical AI suitable for performing complex tasks in the real world. Its key features are:

- Bimanual manipulation: it can work with two hands like a human, which is a very difficult skill for a robot.
- Natural language commands: the robot can understand instructions given in simple language and carry out delicate tasks accordingly.
- New sensing methods: it adds advanced sensors that help it understand its surroundings more accurately.
- Learning from human feedback: it can continuously improve the quality of its work based on feedback from humans.

When physical AI leaves the laboratory and becomes directly involved in everyday life (such as household chores or factories), it will make our lives easier and more efficient. This initiative by Microsoft is a big step in shaping the future of robotics.

This is exciting to see. Turning natural language into precise, two-handed manipulation is a big step toward making robots genuinely useful, not just impressive in demos. The focus on sensing and learning from human feedback really shows where this is headed—more intuitive, more collaborative, and much closer to real-world deployment. Feels like a meaningful stride toward physical AI that actually works alongside people.

Impressive progress. Bridging language and physical action is what will make AI truly useful in the real world. Looking forward to the practical applications this unlocks.

Translating language into reliable physical action is a major step toward real-world AI. Human feedback and precision will be critical as physical AI moves from research to deployment.

Advances like Rho-alpha make one thing clear: the next frontier isn’t just the model, but the environment where that model is built, tested, and constrained. Physical AI can’t emerge from spaces designed for SaaS teams. It requires infrastructure built for hardware/software integration, safe experimentation, traceability, and real human feedback. Designing the physical layer before computation is the quiet challenge of deep tech. Without it, physical AI remains stuck in the lab.
