Summary

AI decisioning automatically chooses the next-best action per customer within constraints. It executes actions (not just variants or predictions), should be measured with incrementality testing rather than raw conversions, and should be validated in shadow mode before launch.

Artificial intelligence (AI) decisioning automates the selection of the next-best action for each customer, constrained by business rules, frequency caps, and real-time context. It selects:

- The right offer

- The right channel

- The right timing

- The right message

It predicts what a customer will do and helps determine the best action to take, then executes that action. This guide explains how AI decisioning works across the customer journey, how to measure it with incrementality testing, and how to implement it without costly production failures.

You’ll learn the difference between personalization and decision intelligence, the infrastructure required for real-time and batch decisioning, and the guardrails that keep AI systems compliant and trustworthy. By the end, you’ll have a clear roadmap for moving from static segmentation to autonomous, outcome-driven customer engagement.

A retention campaign sends a discount to a customer who was already logging in to renew. The personalization engine calls it a win because the customer converted. But you just gave away margin on someone who needed no intervention.

That’s personalization without decision intelligence.

AI decisioning is the automated selection of the optimal action (offer, channel, timing, message) for each customer, subject to eligibility rules, frequency caps, and business objectives. It predicts likely customer behavior and selects the best action to take based on business goals and constraints.

| Capability | Primary output | Scope | Success metric |
|---|---|---|---|
| Journey analytics | Reports and visualizations | Post-hoc, cross-channel | Insight generation |
| Personalization | Content variants | Single touchpoint | Conversion rate |
| AI decisioning | Next-best action | Cross-channel, real-time | Incremental lift |

What AI decisioning is not:

- A recommendation engine alone: Recommendations focus on products, while decisioning also weighs actions and constraints

- A reporting dashboard: Dashboards report past performance, while decisioning helps determine future actions

- A rules-only workflow: Rules handle known, predefined conditions, while decisioning also discovers patterns and optimizes across conditions a human didn’t explicitly anticipate, including interactions between variables like channel preference, time sensitivity, and discount response.

How AI decisioning works across the customer journey

Many “AI-powered” campaigns still rely on static segments refreshed overnight. By the time the decision reaches the customer, it’s already stale.

Real AI decisioning follows a continuous lifecycle:

- Signal capture: Behavioral events, transactional data, consent flags

- Feature computation: Real-time aggregations and derived attributes

- Model inference: Propensity, uplift, or ranking scores

- Policy evaluation: Eligibility rules, frequency caps, exclusion lists

- Action selection: Channel, offer, timing

- Outcome capture: Feedback logged to improve future decisions
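To make the lifecycle concrete, here is a minimal Python sketch of one pass through it. Everything in it is hypothetical: the action catalog, the hand-written scoring function standing in for a trained model, and the consent and frequency-cap policy. It shows how the stages hand data to each other. It does not show how any particular platform implements them.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical action catalog: each action is delivered on one channel.
ACTIONS = {"cart_reminder": "email", "browse_recs": "onsite", "win_back_push": "push"}


@dataclass
class DecisionRecord:
    customer_id: str
    action: Optional[str]          # None means "take no action"
    scores: dict
    decided_at: datetime
    outcome: Optional[str] = None  # filled in later by outcome capture


def compute_features(events: list) -> dict:
    # Feature computation: simple counts over captured behavioral events.
    return {"page_views": events.count("page_view"),
            "cart_adds": events.count("add_to_cart")}


def score_actions(features: dict) -> dict:
    # Model inference stand-in: made-up linear scores, not a trained model.
    return {"cart_reminder": 0.5 * features["cart_adds"],
            "browse_recs": 0.1 * features["page_views"],
            "win_back_push": 0.05}


def is_eligible(action: str, consent: set, sent_today: int, daily_cap: int) -> bool:
    # Policy evaluation: channel consent plus a daily frequency cap.
    return ACTIONS[action] in consent and sent_today < daily_cap


def decide(customer_id, events, consent, sent_today, daily_cap, log):
    # Signal capture is assumed done: events and consent flags are passed in.
    scores = score_actions(compute_features(events))
    eligible = {a: s for a, s in scores.items()
                if s > 0 and is_eligible(a, consent, sent_today, daily_cap)}
    # Action selection: highest-scoring eligible action, or no action at all.
    action = max(eligible, key=eligible.get) if eligible else None
    record = DecisionRecord(customer_id, action, scores, datetime.now(timezone.utc))
    log.append(record)  # decision log
    return record


def capture_outcome(record: DecisionRecord, outcome: str) -> None:
    # Outcome capture: attach the observed result so it can feed retraining.
    record.outcome = outcome
```

In a production system, each stage would typically be its own component (feature store, model server, policy engine) behind the Decision API. The log would be persisted rather than held in a list.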

A few terms you’ll encounter:

- Feature store: A centralized repository serving data to models for training and inference

- Policy engine: The component enforcing business rules and constraints

- Decision API: The interface applications call to request the next-best action

- Feedback loop: The mechanism feeding outcome data back to improve accuracy

What data foundation and real-time signals does AI decisioning need?

If identity resolution runs overnight, your real-time decisions are hours behind.

You need these categories of signals:

- Behavioral: Page views, clicks, cart events

- Transactional: Purchases, returns, subscriptions

- Contextual: Device, location, time

- Consent: Opt-in status, preference center flags

Unified customer profiles are a prerequisite. Without them, the decision engine can’t see the full context. If you want to see what “unified + real-time” looks like in practice across channels, book a demo, and we’ll walk through the end-to-end decision flow.

How does decision intelligence optimize action selection?

Targeting customers with the highest propensity to convert often wastes budget on people who would have converted anyway.

Uplift modeling estimates the incremental impact of an action, not just the likelihood of an outcome. A customer with high propensity but near-zero uplift shouldn’t receive your retention offer. They were going to renew at full price.
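One simple way to see the difference between propensity and uplift is to compare treated and control conversion rates from a randomized test. The sketch below assumes segment-level results with made-up numbers. A real system would estimate uplift per customer, for example with a two-model or another uplift-modeling approach, but the decision logic is the same: act only where the incremental value covers the cost.

```python
from dataclasses import dataclass


@dataclass
class SegmentResult:
    name: str
    treated_n: int
    treated_conv: int
    control_n: int
    control_conv: int

    @property
    def propensity(self) -> float:
        # Outcome rate among treated customers: what propensity targeting ranks by.
        return self.treated_conv / self.treated_n

    @property
    def uplift(self) -> float:
        # Incremental effect: treated rate minus control (no-offer) rate.
        return self.treated_conv / self.treated_n - self.control_conv / self.control_n


def should_target(seg: SegmentResult, value_per_conversion: float, cost_per_offer: float) -> bool:
    # Act only if the expected incremental value covers the cost of the offer.
    return seg.uplift * value_per_conversion > cost_per_offer


# Illustrative numbers: loyal renewers convert at ~90% with or without the offer.
loyal = SegmentResult("loyal_renewers", 1000, 900, 1000, 890)
at_risk = SegmentResult("at_risk", 1000, 400, 1000, 250)
```

Propensity ranks the loyal segment first (0.90 vs. 0.40), but its uplift is only about 0.01. At a value of 100 per conversion and an offer cost of 5, it is not worth targeting. The at-risk segment's 0.15 uplift is.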

Insider One’s Sirius AI™ applies this principle directly: Discount Affinity Modeling identifies which users need a discount to convert versus those who would have purchased at full price, protecting margin. Churn Risk Scoring flags users showing early disengagement signals before they unsubscribe, so retention offers reach the users where intervention actually changes the outcome.

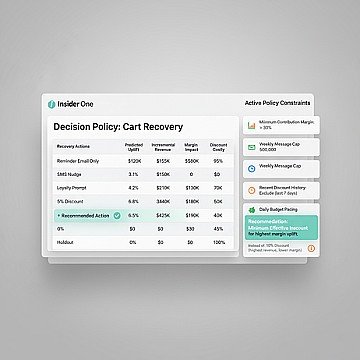

The decision framework:

- Define the objective: Revenue, retention, engagement

- Score candidates: Propensity, uplift, or hybrid

- Apply constraints: Eligibility, frequency caps, channel capacity, margin floors

- Select the action: Maximize the objective within constraints
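The four steps of the decision framework map onto a constrained argmax. This sketch assumes each candidate action already carries an uplift estimate and a per-conversion margin. It uses expected incremental margin as the objective. The field names, caps, and numbers are illustrative, not a prescribed schema.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Candidate:
    action: str
    channel: str
    uplift: float          # estimated incremental conversion probability
    margin: float          # margin per conversion, net of any discount
    eligible: bool = True  # result of eligibility rules for this customer


def select_action(candidates, sends_today, daily_cap, channel_capacity,
                  margin_floor) -> Optional[Candidate]:
    # Constraint: frequency cap for this customer.
    if sends_today >= daily_cap:
        return None
    # Constraints: eligibility, remaining channel capacity, margin floor.
    feasible = [c for c in candidates
                if c.eligible
                and channel_capacity.get(c.channel, 0) > 0
                and c.margin >= margin_floor]
    if not feasible:
        return None
    # Objective: maximize expected incremental margin = uplift x margin.
    best = max(feasible, key=lambda c: c.uplift * c.margin)
    return best if best.uplift * best.margin > 0 else None
```

Returning `None` is a legitimate decision: when no action clears the constraints, the best action is no action.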

Multi-armed bandits balance exploration (testing new actions) with exploitation (using proven ones). This keeps the system learning without sacrificing too much performance.
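Thompson sampling is one common bandit strategy. Epsilon-greedy and UCB are others. The sketch below assumes a binary reward, such as a click or conversion, and keeps a Beta posterior per action. The simulated "true" rates exist only to show the sampler concentrating on the best action over time.

```python
import random


class ThompsonSampler:
    """Beta-Bernoulli Thompson sampling over a fixed set of actions."""

    def __init__(self, actions, seed=None):
        # Beta(1, 1) prior: a uniform belief about each action's success rate.
        self.alpha = {a: 1.0 for a in actions}
        self.beta = {a: 1.0 for a in actions}
        self.rng = random.Random(seed)

    def choose(self):
        # Draw one plausible rate per action and play the highest draw.
        # Uncertain actions sometimes draw high (exploration); actions with
        # strong evidence usually win (exploitation).
        draws = {a: self.rng.betavariate(self.alpha[a], self.beta[a]) for a in self.alpha}
        return max(draws, key=draws.get)

    def update(self, action, success):
        # Posterior update: successes increment alpha, failures increment beta.
        if success:
            self.alpha[action] += 1
        else:
            self.beta[action] += 1


def simulate(true_rates, rounds=5000, seed=7):
    """Simulate customers responding at hidden true_rates; count plays per action."""
    env = random.Random(seed + 1)
    bandit = ThompsonSampler(true_rates, seed=seed)
    pulls = {a: 0 for a in true_rates}
    for _ in range(rounds):
        action = bandit.choose()
        pulls[action] += 1
        bandit.update(action, env.random() < true_rates[action])
    return pulls
```

For example, `simulate({"offer_a": 0.04, "offer_b": 0.12, "offer_c": 0.02})` should allocate most of its plays to `offer_b`. It still occasionally tries the others.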

How does autonomous execution support closed-loop learning?

If outcomes aren’t captured with the same granularity as decisions, the model learns nothing.

The feedback loop:

- Decision log: Who, what, when, why

- Outcome capture: Conversion, revenue, engagement within the attribution window

- Performance aggregation: Summarize results to evaluate effectiveness

- Drift detection: Identify when input data or behavior shifts

- Model refresh: Retrain or update policies based on new data

Immediate outcomes like clicks and opens can be measured within hours. Delayed outcomes like purchases and renewals may require attribution windows of 7–30 days depending on the product category and sales cycle.
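Joining outcomes back to decisions is where the attribution window gets enforced. This sketch assumes a simple last-decision-wins rule per customer and in-memory lists. The record types and the rule itself are illustrative choices. A production pipeline would typically run this join in a data warehouse or stream processor.

```python
from datetime import datetime, timedelta
from typing import NamedTuple


class Decision(NamedTuple):
    customer_id: str
    decided_at: datetime
    action: str


class Outcome(NamedTuple):
    customer_id: str
    occurred_at: datetime
    value: float


def attribute(decisions, outcomes, window: timedelta):
    """Credit each outcome to the customer's latest decision within `window` before it."""
    credited = []
    for o in outcomes:
        prior = [d for d in decisions
                 if d.customer_id == o.customer_id
                 and d.decided_at <= o.occurred_at <= d.decided_at + window]
        if prior:  # outcomes outside every window stay unattributed
            latest = max(prior, key=lambda d: d.decided_at)
            credited.append((o.customer_id, latest.action, o.value))
    return credited
```

With a 30-day window, a purchase three days after a decision is credited. A renewal 40 days later is not, which is why the window has to match the product's sales cycle.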

What to monitor:

- Calibration: When the model predicts a 30% purchase probability, do ~30% of those users actually purchase?

- Drift: Are customer behaviors or data patterns changing in ways the model wasn’t trained on?

- Latency: Are decisions returned fast enough for each channel’s requirements?

- Bias: Are certain customer segments consistently receiving more or fewer actions than expected?
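Calibration and drift can both be checked in a few lines. The sketch below bins predictions to compare predicted and observed rates. It also computes the population stability index (PSI), one widely used drift statistic, over pre-binned distributions. Common rules of thumb treat PSI above roughly 0.1 as worth watching and above roughly 0.25 as a significant shift. Those thresholds are conventions, not guarantees.

```python
import math


def calibration_table(preds, outcomes, bins=10):
    """Per equal-width bin: (mean predicted probability, observed rate, count)."""
    buckets = {}
    for p, y in zip(preds, outcomes):
        b = min(int(p * bins), bins - 1)  # clamp p == 1.0 into the last bin
        buckets.setdefault(b, []).append((p, y))
    table = []
    for b in sorted(buckets):
        rows = buckets[b]
        mean_pred = sum(p for p, _ in rows) / len(rows)
        observed = sum(y for _, y in rows) / len(rows)
        table.append((round(mean_pred, 3), round(observed, 3), len(rows)))
    return table


def psi(expected, actual, eps=1e-6):
    """Population stability index between two binned distributions (proportions per bin)."""
    # eps guards against log(0) when a bin is empty in either distribution.
    return sum((a - e) * math.log((a + eps) / (e + eps)) for e, a in zip(expected, actual))
```

A well-calibrated model shows mean prediction close to observed rate in every bin. For example, ten users scored at 0.3 with three purchases yield the row `(0.3, 0.3, 10)`.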

When should you use real-time vs. batch decisioning?

Channel latency requirements drive the choice.

| Channel | Latency requirement | Recommended pattern |
|---|---|---|
| On-site/in-app | Sub-200ms | Real-time |
| Push notifications | Sub-5 seconds | Real-time |
| Email | Minutes to hours | Batch or hybrid |
| Paid media | Minutes to hours | Batch |

A hybrid pattern precomputes scores in batch, then applies real-time constraints (eligibility, frequency) at decision time.
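Here is a minimal sketch of the hybrid pattern. Scores come from a precomputed batch table. Suppression and a rolling 24-hour frequency cap are evaluated against live state at request time. The table contents, action names, and cap logic are hypothetical.

```python
import time

# Batch layer (e.g., a nightly job): precomputed scores per customer and action.
BATCH_SCORES = {"c1": {"push_offer": 0.42, "email_digest": 0.30}}

DAY_SECONDS = 86_400


def decide_hybrid(customer_id, recent_send_times, daily_cap, suppressed, now=None):
    """Pick the best precomputed action after live suppression and frequency checks."""
    now = time.time() if now is None else now
    # Real-time layer: evaluate current state at request time, not at batch time.
    if customer_id in suppressed:
        return None
    sends_last_24h = sum(1 for t in recent_send_times if now - t < DAY_SECONDS)
    if sends_last_24h >= daily_cap:
        return None
    scores = BATCH_SCORES.get(customer_id)
    if not scores:
        return None  # no batch score yet, e.g., a brand-new customer
    return max(scores, key=scores.get)
```

The expensive part (scoring) runs offline. The cheap but freshness-sensitive part (constraints) runs online. That split is what keeps the hybrid pattern fast without serving stale eligibility.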

Real-time adds infrastructure complexity. Teams with limited engineering capacity should start with batch and add real-time only for high-value, latency-sensitive channels. If you’re mapping what to run in real time vs. batch and what it takes to operationalize it, use the product demo hub to explore the patterns before you commit engineering cycles.

What guardrails and governance keep AI decisioning trustworthy?

An AI system sends a discount to a customer who just filed a complaint. Or exceeds the daily message cap. Or targets a segment excluded by legal.

These failures happen when guardrails exist in policy documents but aren’t encoded in the decision engine.

Guardrail categories:

- Eligibility rules: Who can receive an action (exclude customers with active support tickets)

- Frequency caps: Max messages per day, week, or channel

- Content safety: Brand guidelines, legal restrictions

- Fairness checks: Segment-level exposure audits

- Kill switch: Manual override to halt a campaign instantly

A proper decision log records:

- Customer ID

- Decision timestamp

- Action selected

- Model version

- Constraints applied

- Outcome
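One way to make the decision-log fields concrete is a typed record serialized to JSON. The field names and example values below are assumptions for illustration. Each entry should capture enough to reconstruct why a decision was made and to join its outcome later.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class DecisionLogEntry:
    customer_id: str
    decided_at: str                  # ISO 8601 UTC timestamp
    action: str
    model_version: str
    constraints_applied: list = field(default_factory=list)
    outcome: Optional[str] = None    # filled in later by outcome capture

    def to_json(self) -> str:
        # Stable key order makes log lines easy to diff and index.
        return json.dumps(asdict(self), sort_keys=True)


entry = DecisionLogEntry(
    customer_id="c42",
    decided_at=datetime(2024, 1, 1, tzinfo=timezone.utc).isoformat(),
    action="retention_offer_15",          # hypothetical action ID
    model_version="uplift-v3",            # hypothetical model version tag
    constraints_applied=["frequency_cap:email:1/day", "exclude:open_ticket"],
)
```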

Before activation, test guardrails with synthetic edge cases. Verify exclusion lists are active. Confirm frequency caps trigger correctly across channels.
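Guardrails are easiest to test when they live in code as a single check the decision engine must pass. The sketch below assumes a hypothetical rule set: a kill switch, an exclusion list, an open-ticket eligibility rule, and per-channel and cross-channel daily caps. It runs a handful of synthetic edge cases against that rule set before anything goes live.

```python
from dataclasses import dataclass, field


@dataclass
class Guardrails:
    kill_switch: bool = False
    exclusion_list: set = field(default_factory=set)  # e.g., customers excluded by legal
    channel_caps: dict = field(default_factory=dict)  # per-channel daily caps
    global_cap: int = 3                               # daily cap across all channels


def check(g: Guardrails, customer: dict, channel: str, sends_today: dict):
    """Return (allowed, reason); sends_today maps channel -> messages already sent today."""
    if g.kill_switch:
        return False, "kill_switch"
    if customer["id"] in g.exclusion_list:
        return False, "excluded"
    if customer.get("open_support_ticket"):
        return False, "open_ticket"
    if sends_today.get(channel, 0) >= g.channel_caps.get(channel, g.global_cap):
        return False, "channel_cap"
    if sum(sends_today.values()) >= g.global_cap:
        return False, "global_cap"
    return True, "ok"


# Synthetic edge cases, run before activation.
rules = Guardrails(exclusion_list={"legal_hold_1"}, channel_caps={"email": 1, "push": 2})
cases = [
    ({"id": "c1"}, "email", {}, (True, "ok")),
    ({"id": "c1", "open_support_ticket": True}, "email", {}, (False, "open_ticket")),
    ({"id": "legal_hold_1"}, "push", {}, (False, "excluded")),
    ({"id": "c1"}, "email", {"email": 1}, (False, "channel_cap")),
    # Cross-channel: SMS is under its own cap, but the daily total is already 3.
    ({"id": "c1"}, "sms", {"email": 1, "push": 2}, (False, "global_cap")),
]
for customer, channel, sent, expected in cases:
    assert check(rules, customer, channel, sent) == expected, (customer, channel)
assert check(Guardrails(kill_switch=True), {"id": "c1"}, "email", {}) == (False, "kill_switch")
```

Returning a reason string alongside the verdict lets the same check populate the "constraints applied" field of the decision log.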

How to measure AI decisioning with incrementality testing

High conversion rates on AI-targeted campaigns often reflect selection bias: the customers who received the offer were already likely to convert. Incrementality testing is how marketers separate real lift from that noise.

The measurement hierarchy:

- Delivery metrics: Sent, delivered, opened (necessary but insufficient)

- Response metrics: Clicks, conversions (confounded by selection)

- Incrementality metrics: Causal lift vs. holdout (the true measure)

Holdout design: randomly withhold a portion of the eligible population from the AI-selected action and compare outcomes. If the treatment group converts at a higher rate than the holdout, the difference is the incremental lift attributable to the decision.
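A trustworthy holdout needs two pieces. The first is assignment that is random but stable for each customer. The second is a significance test on the difference. This sketch assumes hash-based assignment and a standard two-proportion z-test. The experiment name and holdout share are placeholders.

```python
import hashlib
import math
from statistics import NormalDist


def in_holdout(customer_id: str, experiment: str, holdout_share: float = 0.1) -> bool:
    # Deterministic assignment: hash of (experiment, customer) mapped into [0, 1).
    digest = hashlib.sha256(f"{experiment}:{customer_id}".encode()).hexdigest()
    return int(digest[:8], 16) / 0x1_0000_0000 < holdout_share


def incremental_lift(treat_conv, treat_n, hold_conv, hold_n):
    """Absolute lift (treatment rate minus holdout rate) and two-sided p-value."""
    p_t, p_h = treat_conv / treat_n, hold_conv / hold_n
    pooled = (treat_conv + hold_conv) / (treat_n + hold_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / treat_n + 1 / hold_n))
    z = (p_t - p_h) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return p_t - p_h, p_value
```

Hashing keeps a customer in the same group across sessions and channels, which also helps limit contamination. In the examples below, the same one-point lift (6% vs. 5%) is not significant with a 2,000-customer holdout but is clearly significant with 10,000.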

Pitfalls to avoid:

- Contamination: Holdout customers receive the action through another channel

- Insufficient size: The holdout group is too small for statistical significance

- Short windows: Attribution windows too brief for delayed outcomes like renewals
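To avoid an undersized holdout, estimate the required sample before launch. This uses the standard normal-approximation formula for comparing two proportions with equal group sizes. The baseline rate and minimum detectable lift in the example are assumptions you would replace with your own.

```python
import math
from statistics import NormalDist


def holdout_size_per_group(p_control: float, min_lift: float,
                           alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate customers per group for a two-sided two-proportion test."""
    p_treat = p_control + min_lift
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p_control * (1 - p_control) + p_treat * (1 - p_treat)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / min_lift ** 2)
```

Detecting a lift from 5% to 6% needs roughly 8,200 customers per group. Because holdouts are usually smaller than the treatment group, an unequal split needs a larger total than this equal-split estimate.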

Holdouts sacrifice short-term revenue for measurement accuracy. Teams under pressure to hit quarterly targets often skip them, then lose the ability to prove return on investment (ROI). If you want a clean template for holdouts, attribution windows, and contamination controls, book a demo and we’ll show how to instrument incrementality without slowing delivery.

What use cases does AI decisioning support across the customer journey?

Each use case follows a consistent structure: signal, decision, channel, constraint, key performance indicator (KPI).

How do cross-sell and upsell programs benefit from constraint-aware recommendations?

Standard product recommendation engines optimize for click probability, not incremental revenue or margin. A cross-sell system without constraints can recommend a low-margin accessory that cannibalizes a higher-margin bundle.

- Signal: Purchase completed, cart contents, browse history

- Scoring: Product affinity × uplift × margin

- Constraints: Inventory availability, margin floor, exclusion of recently purchased categories

- Action: Personalized cross-sell in post-purchase email or on-site overlay

KPIs: attach rate lift, incremental revenue per order, margin impact.
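The cross-sell signal, scoring, and constraint steps can be sketched as a filter followed by a sort. The product data, margin floor, and category exclusion are hypothetical. In practice, affinity and uplift would come from models.

```python
from dataclasses import dataclass


@dataclass
class Product:
    sku: str
    category: str
    affinity: float   # model-estimated interest in the product
    uplift: float     # estimated incremental purchase effect of recommending it
    margin: float     # unit margin in currency
    in_stock: bool


def rank_cross_sell(products, recent_categories, margin_floor, k=3):
    # Constraints: inventory, margin floor, no recently purchased categories.
    eligible = [p for p in products
                if p.in_stock
                and p.margin >= margin_floor
                and p.category not in recent_categories]
    # Score: product affinity x uplift x margin.
    ranked = sorted(eligible, key=lambda p: p.affinity * p.uplift * p.margin, reverse=True)
    return [p.sku for p in ranked[:k]]


catalog = [
    Product("case", "accessories", 0.6, 0.05, 4.0, True),     # below margin floor
    Product("bundle", "bundles", 0.3, 0.10, 40.0, True),
    Product("charger", "accessories", 0.5, 0.08, 12.0, True),
    Product("watch_strap", "straps", 0.4, 0.09, 20.0, False),  # out of stock
    Product("phone", "phones", 0.2, 0.02, 100.0, True),        # just purchased
]
```

With a margin floor of 5 and "phones" as the recent category, the high-affinity, low-margin case drops out, and the bundle outranks the charger.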

Insider One’s Smart Recommender addresses the margin and cannibalization problem directly: recommendations can be filtered by inventory availability, margin thresholds, and custom attributes (e.g., ‘compatible_device’), and every recommendation strategy supports built-in A/B testing to measure incremental lift against a control group.

For example, jewellery brand Chow Sang Sang used Insider One’s Smart Recommender with built-in A/B testing and measured a 6.69% uplift in average order value and a 9.69% uplift in incremental revenue.

How does AI decisioning improve churn prediction and retention offers?

Targeting all high-churn-risk customers with a discount wastes budget on customers who would have churned regardless and customers who would have stayed without intervention.

- Signal: Engagement decline, support tickets, contract approaching renewal

- Scoring: Churn propensity × uplift from offer

- Constraints: Offer budget, eligibility rules, frequency caps

- Action: Personalized retention offer via preferred channel

Withhold the offer from a random subset of high-uplift customers and compare renewal rates. Aggressive discounting improves short-term retention but erodes margin. Decisioning systems should optimize for net value, not just renewal count.
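Scoring on net value rather than churn risk alone can be sketched as a greedy allocation under an offer budget. This sketch assumes a fixed cost per offer and a "save rate" estimated elsewhere. The save rate is the share of would-be churners the offer retains. Both are simplifications. In practice, a discount also costs margin on customers who would have stayed, and a fuller model would account for that.

```python
from dataclasses import dataclass


@dataclass
class Customer:
    id: str
    churn_prob: float     # churn propensity
    save_rate: float      # hypothetical: share of would-be churners the offer retains
    annual_value: float   # margin retained if the customer stays


def net_value(c: Customer, offer_cost: float) -> float:
    # Churn propensity x uplift from offer x value retained, minus the offer's cost.
    return c.churn_prob * c.save_rate * c.annual_value - offer_cost


def plan_offers(customers, offer_cost: float, budget: float):
    """Greedy allocation by expected net value under a fixed offer budget."""
    ranked = sorted(customers, key=lambda c: net_value(c, offer_cost), reverse=True)
    chosen, spent = [], 0.0
    for c in ranked:
        if net_value(c, offer_cost) <= 0 or spent + offer_cost > budget:
            break  # remaining customers are unprofitable or the budget is spent
        chosen.append(c.id)
        spent += offer_cost
    return chosen


customers = [
    Customer("sure_stayer", 0.05, 0.5, 200.0),    # would stay anyway
    Customer("lost_cause", 0.90, 0.02, 200.0),    # would churn regardless
    Customer("persuadable_a", 0.60, 0.4, 200.0),
    Customer("persuadable_b", 0.50, 0.3, 150.0),
    Customer("persuadable_c", 0.40, 0.3, 100.0),
]
```

Neither the sure stayer nor the lost cause receives an offer at any budget. A budget of 20 at an offer cost of 10 goes to the two most persuadable customers.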

Insider One automates this workflow end to end: Sirius AI™’s Churn Risk Scoring identifies users showing early disengagement, Architect triggers a personalized win-back journey on the user’s highest-engagement channel, and the built-in holdout framework measures incremental retention lift.

How does AI decisioning support on-site and in-app personalization at very low latency?

On-site decisions must complete in under 200ms to avoid degrading page load. Insider One’s Web Suite and Smart Recommender serve personalized content (product recommendations, banners, overlays) within this latency threshold, with decisions informed by the user’s real-time session context and unified profile.

The decision application programming interface (API) returns a ranked list of content IDs. The front-end renders the content.
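Here is a sketch of the request side. It scores candidate content IDs under a latency budget and falls back to a static ranking if scoring is too slow or fails. The fallback list, the 200 ms default, and the thread-pool approach are assumptions for illustration. They do not describe any specific vendor's API.

```python
import time
from concurrent.futures import ThreadPoolExecutor

# Shared pool so a slow scoring call never blocks the caller past its budget.
_EXECUTOR = ThreadPoolExecutor(max_workers=4)

# Hypothetical non-personalized fallback, rendered when scoring misses the budget.
DEFAULT_RANKING = ["banner_generic", "bestsellers_grid", "newsletter_signup"]


def rank_content(score_fn, candidate_ids, budget_ms=200, k=3):
    """Return the top-k content IDs, or the static fallback on timeout or error."""
    future = _EXECUTOR.submit(lambda: {cid: score_fn(cid) for cid in candidate_ids})
    try:
        scores = future.result(timeout=budget_ms / 1000)
    except Exception:  # concurrent.futures.TimeoutError or any scoring failure
        return DEFAULT_RANKING[:k]
    return sorted(candidate_ids, key=scores.get, reverse=True)[:k]


def slow_scorer(cid):
    # Simulates a model call that blows the latency budget.
    time.sleep(0.3)
    return 1.0
```

The front end always gets a renderable list. A missed budget degrades personalization, not page load.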

KPIs: click-through rate (CTR) on personalized slots, conversion rate, revenue per session.

On-site personalization is harder to measure incrementally because holdouts degrade user experience. Consider segment-level A/B tests instead.

Should you build or buy AI decisioning?

Teams with strong data engineering can build custom decisioning on top of a customer data platform (CDP) and a machine learning (ML) platform. Teams prioritizing speed-to-value should buy an integrated solution.

| Pattern | Pros | Cons |
|---|---|---|
| Build | Full control, custom optimization | Requires ML operations expertise, multi-month implementation |
| Buy | Faster time-to-value, pre-built guardrails | Less flexibility for custom models |