AI decisioning is the automation layer that determines which message to send, on which channel, at what time, for each customer in real time. It replaces static rules with models that optimize for business outcomes like margin, incrementality, and long-term engagement. Many teams run A/B tests and orchestrate journeys, but still see diminishing returns because they are making decisions by segment, not by individual customer.

This guide explains how AI decisioning works across data, intelligence, and execution layers, how it differs from propensity scoring and rules-based next best action, and how to measure true ROI through incrementality testing. You’ll learn what it takes to operationalize decisioning at scale, avoid black box outcomes, and drive measurable revenue lift across omnichannel marketing.

What are the key takeaways?

AI decisioning is the automation layer that selects the right message, channel, and timing for each customer in real time, replacing static rules with models that optimize for business outcomes.

- Decisioning optimizes for business constraints like margin and budget, not just customer preference

- Operationalizing it requires a unified data layer, a low-latency decision engine, and a feedback loop

- True ROI comes from incrementality testing with holdout groups, not before-and-after comparisons

What is AI decisioning in omnichannel marketing?

A customer receives a discount on an item they would have bought at full price because the marketing platform was optimizing for click-through, not margin. That’s the gap AI decisioning closes.

AI decisioning is the real-time selection of which action to take, on which channel, at what time, for a specific customer, subject to business constraints. It’s distinct from personalization, which adapts content, and orchestration, which sequences workflows. Think of it as the decision tuple: who, when, channel, action, objective, and constraints.
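
As a rough illustration, the decision tuple can be written down as a small data structure. This is a minimal sketch, not a product schema: the class name `DecisionRequest`, its field names, and the sample values are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRequest:
    """One decision: who, when, channel, action, objective, constraints."""
    customer_id: str                        # who
    decide_at: datetime                     # when
    candidate_channels: tuple[str, ...]     # channel options to choose from
    candidate_actions: tuple[str, ...]      # action options to choose from
    objective: str                          # what the engine optimizes for
    constraints: dict[str, float] = field(default_factory=dict)


# Hypothetical request; every value below is illustrative only.
request = DecisionRequest(
    customer_id="cust-123",
    decide_at=datetime(2024, 1, 15, 9, 30, tzinfo=timezone.utc),
    candidate_channels=("email", "push", "sms"),
    candidate_actions=("no_offer", "free_shipping", "discount_10"),
    objective="incremental_margin",
    constraints={"max_messages_per_week": 3, "max_discount_pct": 10},
)
```

Writing the tuple out this way makes it easier to see that personalization only touches the action content, while decisioning has to fill in every field.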

Where does your organization stand? Here’s a quick maturity taxonomy:

- Rules-based: Static if/then logic with no learning

- Propensity models: Predict likelihood of response but ignore causality

- Uplift models: Estimate incremental impact of treatment vs. no treatment

- Contextual bandits: Balance exploration and exploitation in real time

Many teams operate between rules-based systems and propensity models. The jump to uplift and bandits is where decisioning starts to pay off.
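
To make the last level of the taxonomy concrete, here is a minimal sketch of an epsilon-greedy contextual bandit. It is one simple way to balance exploration and exploitation, not the only one, and the class name, the context labels, and the reward values are assumptions for illustration.

```python
import random
from collections import defaultdict


class EpsilonGreedyBandit:
    """Keeps a running mean reward per (context, action) pair."""

    def __init__(self, actions, epsilon=0.1, seed=0):
        self.actions = list(actions)
        self.epsilon = epsilon                # share of decisions spent exploring
        self.rng = random.Random(seed)
        self.counts = defaultdict(int)        # (context, action) -> observations
        self.values = defaultdict(float)      # (context, action) -> mean reward

    def choose(self, context):
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.actions)   # explore: try a random action
        # Exploit: pick the action with the best observed mean in this context.
        return max(self.actions, key=lambda a: self.values[(context, a)])

    def update(self, context, action, reward):
        key = (context, action)
        self.counts[key] += 1
        # Incremental mean update, so no reward history needs to be stored.
        self.values[key] += (reward - self.values[key]) / self.counts[key]


# With epsilon=0 the bandit only exploits, which makes the behavior easy to check.
bandit = EpsilonGreedyBandit(["email", "push"], epsilon=0.0)
bandit.update("evening", "push", reward=1.0)
bandit.update("evening", "email", reward=0.0)
choice = bandit.choose("evening")
```

In practice `epsilon` is kept above zero so the system keeps testing actions it currently believes are worse.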

Why does AI decisioning change omnichannel outcomes?

If your team already runs A/B tests and uses Architect, Insider One’s customer journey orchestration solution, but still sees diminishing returns, the bottleneck may be the level at which decisions are made: by segment rather than by individual customer.

Here’s what changes when decisioning is done well:

- Conversion lift: Decisioning selects offers based on predicted incremental response, avoiding wasted impressions on customers who would convert anyway

- Margin protection: Constraints prevent discount offers to high-intent buyers, preserving margin without manual exclusion rules

- Channel efficiency: Cost-weighted arbitration routes messages to the lowest-cost channel that meets engagement thresholds

- Fatigue reduction: Contact policies enforced at decision time prevent over-messaging, improving long-term engagement

How does AI decisioning work across data, intelligence, and execution?

If your customer data platform (CDP) and Architect are already integrated, why do customers still receive irrelevant offers? The missing layer is the decision engine that sits between data and activation.

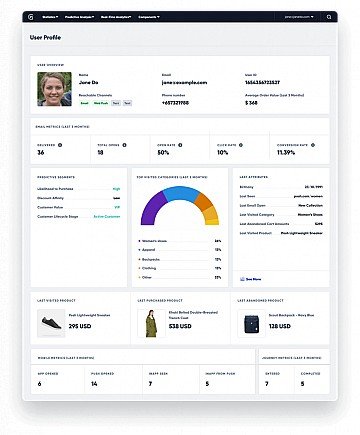

The data layer consists of unified profiles, event streams, and a feature store. This layer pre-computes signals like recency, frequency, and predicted lifetime value for low-latency retrieval.

The decision engine receives a decision request containing the customer ID, context, and available actions. It applies eligibility rules, scores candidates against the objective function, enforces constraints, and returns the winning action with reason codes.

The orchestration layer executes the selected action on the designated channel at the specified time. It also manages fallback logic if the primary channel fails.

The feedback loop captures outcomes like opens, clicks, conversions, and revenue. This data feeds back to retrain models and update feature values.

| Layer | Responsibility | Latency target |
| --- | --- | --- |
| Data | Unified profiles, feature store | Real-time or near-real-time refresh |
| Decision engine | Scoring, arbitration, constraint enforcement | Low-latency for real-time channels |
| Orchestration | Execution, channel routing, fallback | Varies by channel |
| Feedback | Outcome capture, model retraining | Near-real-time for bandits; periodic for batch |
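
The decision engine step described above (eligibility, scoring, constraints, reason codes) can be sketched in a few lines. This is an illustrative outline under stated assumptions, not the API of any particular product: the `Action` class, the `decide` function, the reason code strings, and the use of expected value minus cost as the objective are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Action:
    name: str
    channel: str
    expected_value: float  # hypothetical model output, in currency units
    cost: float            # hypothetical cost of sending on this channel


def decide(profile: dict, actions: list[Action], budget_left: float) -> dict:
    """Eligibility -> scoring -> constraints -> winner with reason codes."""
    # 1. Eligibility: keep only actions on channels the customer opted into.
    eligible = [a for a in actions if a.channel in profile["opted_in_channels"]]

    # 2. Scoring: the objective here is expected value net of cost.
    scored = [(a.expected_value - a.cost, a) for a in eligible]

    # 3. Constraints: drop anything the remaining budget cannot cover.
    feasible = [(s, a) for s, a in scored if a.cost <= budget_left]
    if not feasible:
        return {"action": None, "channel": None,
                "reason_codes": ["NO_FEASIBLE_ACTION"]}

    score, winner = max(feasible, key=lambda pair: pair[0])
    return {
        "action": winner.name,
        "channel": winner.channel,
        "score": round(score, 4),
        "reason_codes": ["ELIGIBLE", "WITHIN_BUDGET", "HIGHEST_NET_VALUE"],
    }


profile = {"customer_id": "cust-123", "opted_in_channels": {"email", "push"}}
actions = [
    Action("discount_10", "sms", expected_value=1.20, cost=0.05),
    Action("free_shipping", "email", expected_value=0.90, cost=0.01),
    Action("reminder", "push", expected_value=0.40, cost=0.00),
]
result = decide(profile, actions, budget_left=1.00)
```

Here the SMS discount has the highest raw value but is never scored, because the customer has not opted into SMS; the email offer wins instead.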

What data readiness does AI decisioning require?

A decision engine that receives stale purchase data will score offers incorrectly. Irrelevant recommendations follow. The issue is rarely data volume; it’s data freshness and identity confidence.

Three dimensions matter:

- Freshness: For real-time channels, behavioral events must propagate to the feature store quickly

- Completeness: Missing attributes limit channel eligibility; the decision engine must handle partial profiles gracefully

- Identity confidence: When identity resolution produces a probabilistic match, the decision engine should factor uncertainty into action selection
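
The three dimensions above can be checked before a profile is scored. The sketch below assumes a profile is a plain dictionary; the field names, the five-minute freshness budget, and the 0.8 identity threshold are placeholders chosen for the example, not recommended values.

```python
from datetime import datetime, timedelta, timezone

MAX_EVENT_AGE = timedelta(minutes=5)   # assumed freshness budget
MIN_IDENTITY_CONFIDENCE = 0.8          # assumed match-confidence threshold
# Assumed mapping from channel to the profile attribute it needs.
CHANNEL_FIELDS = {"email": "email", "sms": "phone", "push": "push_token"}


def assess_readiness(profile: dict, now: datetime) -> dict:
    # Freshness: is the latest behavioral event recent enough to trust?
    fresh = (now - profile["last_event_at"]) <= MAX_EVENT_AGE
    # Completeness: a channel is eligible only if its attribute is present.
    channels = [ch for ch, fld in CHANNEL_FIELDS.items() if profile.get(fld)]
    # Identity confidence: hold back personalized offers on weak matches.
    confident = profile.get("identity_confidence", 0.0) >= MIN_IDENTITY_CONFIDENCE
    return {
        "use_behavioral_features": fresh,
        "eligible_channels": channels,
        "allow_personalized_offer": confident,
    }


now = datetime(2024, 1, 15, 12, 0, tzinfo=timezone.utc)
profile = {
    "last_event_at": now - timedelta(minutes=2),
    "email": "a@example.com",
    "phone": None,
    "push_token": "tok-1",
    "identity_confidence": 0.65,
}
status = assess_readiness(profile, now)
```

In this example the profile is fresh and reachable by email and push, but the probabilistic identity match falls below the assumed threshold, so a generic action would be safer than a personalized offer.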

How do decision intelligence and constraint enforcement work?

Teams that deploy propensity models can fall into a trap: response rates look high, but many of the discounts went to customers who would have converted anyway, so the budget was wasted.

| Use case | Recommended model | Why |
| --- | --- | --- |
| High-volume, low-stakes | Contextual bandits | Balances exploration and exploitation without holdout groups |
| High-stakes, measurable outcomes | Uplift models | Estimates incremental impact of treatment vs. no treatment, avoiding treatment of “sure things” |
| Cold-start or sparse data | Rules + propensity fallback | Propensity provides a baseline when uplift data is insufficient |

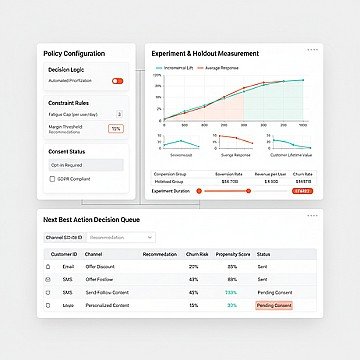

Constraints come in three types:

- Hard constraints: Must be satisfied (regulatory opt-out, inventory availability)

- Soft constraints: Penalized but not forbidden (contact frequency caps, budget limits)

- Fairness constraints: Ensure equitable treatment across customer segments

The decision engine evaluates all eligible actions, scores them against the objective function, applies constraints, and returns the highest-scoring action that passes all hard constraints.
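
The difference between hard and soft constraints shows up clearly in code: a hard constraint removes a candidate, while a soft constraint only lowers its score. The following is a minimal sketch in which the candidate fields, the penalty size, and the function name `select_action` are assumptions; fairness constraints are not covered here.

```python
def select_action(candidates: list[dict], frequency_cap: int,
                  soft_penalty: float = 0.25):
    """Return (name, adjusted_score) of the best candidate, or None."""
    best = None
    for c in candidates:
        # Hard constraints: any violation removes the action outright.
        if c["opted_out"] or not c["in_stock"]:
            continue
        # Soft constraint: going over the contact cap lowers the score
        # in proportion to how far over the cap this send would be.
        over_cap = max(0, c["contacts_this_week"] + 1 - frequency_cap)
        adjusted = c["score"] - soft_penalty * over_cap
        if best is None or adjusted > best[1]:
            best = (c["name"], adjusted)
    return best


candidates = [
    {"name": "sms_discount", "score": 0.9, "opted_out": True,
     "in_stock": True, "contacts_this_week": 0},
    {"name": "email_offer", "score": 0.7, "opted_out": False,
     "in_stock": True, "contacts_this_week": 3},
    {"name": "push_reminder", "score": 0.5, "opted_out": False,
     "in_stock": True, "contacts_this_week": 0},
]
winner = select_action(candidates, frequency_cap=3)
```

The SMS discount scores highest but violates a hard constraint, and the email offer is pushed below the push reminder by the frequency penalty, so the reminder is selected.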

AI decisioning vs A/B testing vs next best action

Some teams run A/B tests on email subject lines and consider their campaigns already “optimized by AI,” yet performance has plateaued. That’s because A/B testing optimizes within a single touchpoint, while AI decisioning optimizes across the entire action space.

| Method | What it optimizes | Data requirement | When to use |
| --- | --- | --- | --- |
| A/B testing | Single variant within one touchpoint | Low | Early-stage experimentation |
| Next best action (rules-based) | Action selection via static logic | Medium | Stable use cases with well-understood behavior |
| AI decisioning (model-driven) | Action, channel, and timing jointly | High | High decision complexity, large action space |

Teams often start with A/B testing, move to rules-based next best action, and then adopt model-driven decisioning as the action space grows. If you want to see what that jump looks like in a real stack, book a demo and we’ll walk through how decisioning replaces rule sprawl with constraint-aware automation.

Each approach has its own limitations:

- A/B testing: Simpson’s paradox when segment composition shifts during the test

- Rules-based: Rule proliferation leads to conflicts and maintenance burden

- Model-driven: Delayed feedback degrades model accuracy if not handled explicitly

How to avoid black box outcomes in AI decisioning

Some people assume that using AI means accepting opaque decisions. That isn’t the case when explainability is built into the design.

Three operational patterns make decisioning transparent:

- Decision logs: Every decision request and response should be logged with reason codes explaining why the winning action was selected

- Consent gating: The decision engine should check consent status at decision time, not rely on upstream filters that may be stale

- Bias monitoring: Track outcome distributions across customer segments and flag anomalies
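
Bias monitoring in particular lends itself to a simple check over logged decisions. The sketch below compares how often each segment receives a given action against the overall rate and flags large gaps. The segment labels, the 0.15 tolerance, and the function name are assumptions for illustration, and the function expects a non-empty list of decisions.

```python
from collections import Counter


def flag_skewed_segments(decisions, action, tolerance=0.15):
    """Return {segment: rate} for segments far from the overall action rate."""
    totals, hits = Counter(), Counter()
    for d in decisions:
        totals[d["segment"]] += 1
        if d["winning_action"] == action:
            hits[d["segment"]] += 1
    overall = sum(hits.values()) / sum(totals.values())
    flagged = {}
    for seg, n in totals.items():
        rate = hits[seg] / n
        if abs(rate - overall) > tolerance:
            flagged[seg] = rate
    return flagged


# Hypothetical log: segments A and B get discounts 30% of the time, C gets 80%.
decisions = (
    [{"segment": "A", "winning_action": "discount"}] * 6
    + [{"segment": "A", "winning_action": "no_offer"}] * 14
    + [{"segment": "B", "winning_action": "discount"}] * 6
    + [{"segment": "B", "winning_action": "no_offer"}] * 14
    + [{"segment": "C", "winning_action": "discount"}] * 8
    + [{"segment": "C", "winning_action": "no_offer"}] * 2
)
flagged = flag_skewed_segments(decisions, action="discount")
```

A flagged segment is a prompt for review, not proof of bias; the gap may be explained by legitimate differences in eligibility or behavior.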

A sample decision log schema looks like the following:

| Field | Description |
| --- | --- |
| decision_id | Unique identifier for the decision request |
| customer_id | Unified profile ID |
| timestamp | Decision time (Coordinated Universal Time, UTC) |
| eligible_actions | List of actions that passed eligibility rules |
| scores | Score for each eligible action |
| winning_action | Selected action |
| reason_codes | Explanation of why this action won |
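
A record following this schema could be assembled as shown below. The field names come from the table above; the helper name `build_decision_log`, the sample scores, and the reason code string are assumptions for the example.

```python
import json
import uuid
from datetime import datetime, timezone


def build_decision_log(customer_id: str, scores: dict, reason_codes: list) -> dict:
    """Assemble one log record; `scores` maps each eligible action to its score."""
    winning_action = max(scores, key=scores.get)
    return {
        "decision_id": str(uuid.uuid4()),
        "customer_id": customer_id,
        "timestamp": datetime.now(timezone.utc).isoformat(),  # UTC decision time
        "eligible_actions": sorted(scores),
        "scores": scores,
        "winning_action": winning_action,
        "reason_codes": reason_codes,
    }


record = build_decision_log(
    customer_id="cust-123",
    scores={"free_shipping": 0.42, "discount_10": 0.31},
    reason_codes=["HIGHEST_SCORE"],
)
line = json.dumps(record)  # one JSON line per decision, ready to append to a log
```

Logging the scores for every eligible action, not just the winner, is what lets someone later reconstruct why an alternative lost.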

If you’re pressure-testing AI for governance, start with the mechanics (reason codes, logs, and controls), then explore the product demo hub to see how explainability shows up in the workflow.

Which omnichannel AI decisioning use cases drive revenue?

A customer abandons a cart containing a high-margin item. The legacy approach sends a discount email later. The decisioning approach evaluates whether a discount is necessary based on predicted purchase probability without intervention, selects the optimal channel, and schedules send time based on historical open patterns.

How does AI decisioning improve retention and churn prevention?

Targeting all customers with high churn propensity scores with a retention offer sounds logical. But some of those customers will churn regardless of intervention. Others will stay regardless. Uplift targeting focuses on the “persuadables” where intervention changes the outcome.

| Segment | Churn propensity | Uplift estimate | Recommended action |
| --- | --- | --- | --- |
| Sure things | Low | Low | No intervention |
| Persuadables | Medium-High | High | Targeted retention offer |
| Lost causes | High | Low | No intervention or low-cost win-back |

Measurement requires a holdout group to estimate incremental retention rate.
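
The segmentation in the table can be expressed as a small rule on top of two model outputs. This is a sketch under assumptions: `uplift` is taken to mean the predicted retention probability with the offer minus the probability without it, and the two thresholds are placeholders that a team would tune on its own data.

```python
def uplift_segment(churn_propensity: float, uplift: float,
                   min_uplift: float = 0.05, high_churn: float = 0.7) -> str:
    """Map a customer to a segment from the table above."""
    if uplift >= min_uplift:
        return "persuadable"   # the offer is predicted to change the outcome
    if churn_propensity >= high_churn:
        return "lost_cause"    # likely to churn, and the offer barely helps
    return "sure_thing"        # likely to stay without any intervention


RECOMMENDED_ACTION = {
    "sure_thing": "no_intervention",
    "persuadable": "targeted_retention_offer",
    "lost_cause": "no_intervention_or_low_cost_win_back",
}

segment = uplift_segment(churn_propensity=0.6, uplift=0.12)
action = RECOMMENDED_ACTION[segment]
```

Note that the rule keys on uplift first: a customer with a very high churn score still receives no offer if the predicted uplift is negligible.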

How does AI decisioning optimize cross-channel offers and timing?

The marketing team has an SMS budget and a contact frequency cap per customer. Without constraint-aware decisioning, the system exhausts the SMS budget on the first eligible customers. Later customers receive only email, regardless of their channel preferences.

Cost-weighted arbitration solves this. Each channel has a cost and an expected engagement rate. The decision engine selects the channel that maximizes expected value minus cost, subject to budget and frequency constraints.

| Channel | Cost per message | Expected engagement | Expected value | Decision |
| --- | --- | --- | --- | --- |
| SMS | Higher | Higher | Higher | Selected |
| Push | Lower | Moderate | Moderate | Fallback |
| Email | Moderate | Lower | Lower | Fallback |
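
Cost-weighted arbitration as described above can be sketched as follows. The per-message costs, engagement rates, and value per engagement are invented numbers for illustration, and the function name `arbitrate` is hypothetical.

```python
def arbitrate(channels: dict, remaining_budget: dict, sends_this_week: int,
              frequency_cap: int, value_per_engagement: float):
    """Pick the channel with the highest expected value minus cost.

    `channels` maps channel name -> (cost_per_message, expected_engagement_rate).
    Returns None when the frequency cap is reached or no channel nets a gain.
    """
    if sends_this_week >= frequency_cap:
        return None  # frequency constraint: do not contact this customer
    best, best_net = None, 0.0
    for name, (cost, engagement_rate) in channels.items():
        # Budget constraint: skip channels whose budget cannot cover one send.
        if cost > remaining_budget.get(name, float("inf")):
            continue
        net = engagement_rate * value_per_engagement - cost
        if net > best_net:
            best, best_net = name, net
    return best


channels = {"sms": (0.05, 0.20), "push": (0.001, 0.08), "email": (0.002, 0.05)}

with_budget = arbitrate(channels, {"sms": 100.0}, sends_this_week=1,
                        frequency_cap=3, value_per_engagement=2.0)
sms_exhausted = arbitrate(channels, {"sms": 0.0}, sends_this_week=1,
                          frequency_cap=3, value_per_engagement=2.0)
```

With SMS budget available the engine selects SMS; once that budget is exhausted it falls back to push, the next-best channel by net expected value. A production system would also pace the budget across the day so early customers do not consume it all.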

If cart abandonment, churn, and channel arbitration are already on your roadmap, book a demo to map your highest-impact use cases to the actions, constraints, and channels you can operationalize first.

How to measure ROI from AI decisioning

If your team measures decisioning success by comparing campaign conversion rates before and after launch, you’re measuring correlation. External factors like seasonality, promotions, and product changes confound the comparison.

Three approaches isolate causal impact:

- Holdout groups: A randomly selected subset of customers who receive no AI-driven decisions serves as a control

- Geo-experiments: When customer-level randomization is impractical, randomize at the geographic level

- Switchback designs: Alternate between treatment and control periods to reduce time-based confounding

| Use case | Recommended design | Why |
| --- | --- | --- |
| High-volume, low-stakes | Customer-level holdout | Sufficient sample size for statistical power |
| Low-volume, high-stakes | Geo-experiment | Reduces variance from individual customer behavior |
| Real-time decisioning with fast feedback | Switchback | Captures time-varying effects |

Interference can occur when customers interact across channels. For example, a treated customer might share a referral code with a control customer, which contaminates the holdout. Monitor for contamination.
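
For a customer-level holdout, the incremental effect is the difference in conversion rate between the treated group and the holdout. The sketch below adds a standard two-proportion z-test to indicate whether the difference could plausibly be noise. The counts are made up for illustration, and the function name is an assumption.

```python
import math


def incremental_lift(treated_conv: int, treated_n: int,
                     holdout_conv: int, holdout_n: int) -> dict:
    """Absolute and relative lift of treated vs. holdout, with a two-sided p-value."""
    p_treated = treated_conv / treated_n
    p_holdout = holdout_conv / holdout_n
    lift = p_treated - p_holdout

    # Two-proportion z-test using the pooled conversion rate.
    pooled = (treated_conv + holdout_conv) / (treated_n + holdout_n)
    se = math.sqrt(pooled * (1 - pooled) * (1 / treated_n + 1 / holdout_n))
    z = lift / se
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided normal tail probability

    return {
        "absolute_lift": lift,
        "relative_lift": lift / p_holdout,
        "z": z,
        "p_value": p_value,
    }


# Hypothetical experiment: 6% conversion when treated vs. 5% in the holdout.
result = incremental_lift(treated_conv=1200, treated_n=20000,
                          holdout_conv=100, holdout_n=2000)
```

A one-point absolute lift looks meaningful here, but with a holdout of only 2,000 customers the test may not reach conventional significance, which is why holdout size should be planned before launch.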

How do you implement AI decisioning?

Teams with a mature CDP and existing journey orchestration can operationalize basic AI decisioning in a matter of weeks. Teams starting from fragmented data should expect longer.

- Define objectives and guardrails: Specify the business objective and catalog constraints

- Audit data readiness: Confirm unified profiles, event streams, and outcome labels are available

- Build the action catalog: Enumerate all possible actions with eligibility rules for each

- Select and train models: Choose model type based on use case; train on historical data

- Integrate the decision engine: Connect to the feature store and orchestration layer

- Run in shadow mode: Log decisions without executing them

- Validate and