Summary

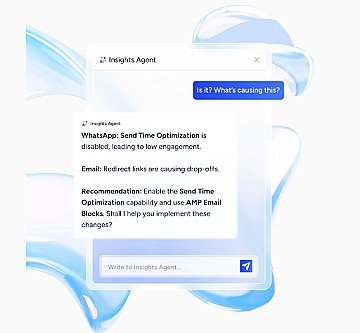

AI agents for cross-channel engagement are autonomous systems that unify customer data, choose the best channel and timing for each user, and deliver personalized messages without manual intervention. Unlike chatbots that follow scripts or automation tools that run on schedules, they act on live behavioral signals. Real-time arbitration prevents over-messaging by scoring channels and enforcing frequency caps before every send. Most failed deployments stem from poor data readiness rather than model quality, which makes identity resolution critical before launch.

Rule-based marketing automation can’t keep up when customers switch from browsing on mobile to abandoning a cart on desktop, then checking email later. Batch segmentation refreshes on a delayed cadence. Static journeys break the moment intent shifts. Artificial intelligence (AI) agents for cross-channel engagement solve this by making real-time, per-user decisions about which channel to use, what message to send, and when to act, without waiting for a marketer to build another workflow.

This guide explains what AI agents are, how they differ from chatbots and marketing automation, and why they matter for teams managing high-volume, multi-channel engagement. You’ll learn how agents arbitrate across channels, enforce frequency caps, and close the loop on learning without manual split (A/B) tests. We’ll cover high-impact use cases like cart recovery and lead qualification, walk through an implementation playbook that prioritizes data readiness over model complexity, and show you how to measure incremental lift with holdout testing. By the end, you’ll know whether to build or buy, how to model total cost of ownership, and what success looks like when agents run your cross-channel orchestration.

You probably already use chatbots for scripted responses and marketing automation for scheduled campaigns. Neither decides autonomously which channel, message, and timing fits a specific user at a specific moment. That’s what an AI agent does.

An AI agent is a system with four core capabilities:

- Autonomy: Initiates actions based on data signals without requiring a human trigger

- Memory: Retains context across sessions and channels, remembering a web visit when sending an email

- Tool access: Calls application programming interfaces (APIs), queries a customer data platform (CDP), and writes data back to a customer relationship management (CRM) system

- Multi-channel execution: Sends or suppresses messages across short message service (SMS), email, WhatsApp, push, and web in a single decision loop

| Feature | AI Agent | Chatbot | Marketing Automation |
| --- | --- | --- | --- |
| Trigger type | Event-driven | User-initiated | Scheduled |
| Decision scope | Real-time per-user | Scripted trees | Segment-level rules |
| Learning loop | Continuous | None | Batch A/B testing |

“Agentic AI” refers to this autonomous, tool-calling architecture. It differs from generative AI, which creates content, and predictive AI, which scores likelihoods. When evaluating platforms, confirm whether a vendor’s “AI agent” actually executes decisions or merely suggests them.

Why AI agents for cross-channel engagement matter.

Rule-based journeys break when customers switch channels mid-session. Batch segmentation can’t react to intent signals that expire in minutes.

Agents solve these constraints by moving decision-making to the edge of the interaction:

- Arbitration across channels: The agent scores each channel per user and picks one, reducing duplicate sends and channel fatigue

- Closed-loop learning: Every send, open, and conversion feeds back into the model, so performance compounds without manual A/B test cycles

- Consent-aware suppression: The agent checks opt-in status per channel before acting, lowering compliance risk

- Latency compression: Decisions happen within seconds of a triggering event, so a cart abandonment message reaches the user while intent is still warm

- Send Time Optimization: Beyond speed, agents also optimize when to send, using individual engagement patterns to pick the moment each user is most likely to act

If your team waits for periodic segment refreshes, agents collapse that delay to real-time decision-making.
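Send Time Optimization is a product feature whose internals this article doesn't describe. As a minimal sketch of the general idea, assuming each user's past opens and clicks are available as timestamps, an agent could pick the hour of day at which that user has engaged most often. `best_send_hour` and the default hour of 10 are hypothetical.

```python
from collections import Counter
from datetime import datetime

DEFAULT_SEND_HOUR = 10  # hypothetical fallback for users with no engagement history


def best_send_hour(engagement_times: list[datetime], default: int = DEFAULT_SEND_HOUR) -> int:
    """Return the hour of day (0-23) at which this user engaged most often.

    Ties go to the earliest hour; users with no history get the default.
    """
    if not engagement_times:
        return default
    counts = Counter(t.hour for t in engagement_times)
    # Most engagements first, then the earliest hour as a deterministic tie-breaker.
    return min(counts, key=lambda hour: (-counts[hour], hour))


history = [
    datetime(2024, 5, 1, 19, 5),
    datetime(2024, 5, 3, 19, 40),
    datetime(2024, 5, 4, 8, 15),
]
print(best_send_hour(history))  # 19
```

A production model would also weigh recency, time zones, and day of week; counting engagements per hour is only the smallest version of per-user timing.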

How AI agents coordinate across channels in real-time.

A user adds an item to cart on mobile, then closes the app. Within seconds, the agent must decide among these options:

- Send a push notification

- Send an SMS

- Send an email

- Send nothing

That decision involves data retrieval, scoring, suppression checks, and action dispatch.

Select the next best action per user and channel.

Insider One’s Sirius AI™ includes predictive models such as Likelihood to Click and Likelihood to Unsubscribe, which feed directly into the agent’s channel-scoring logic to maximize engagement while minimizing opt-outs.

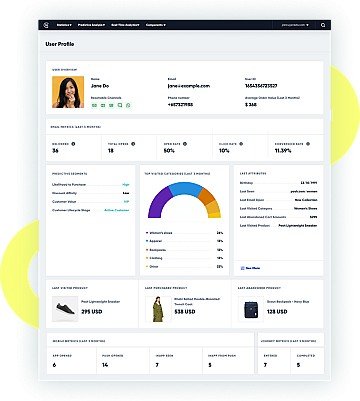

The agent retrieves the user’s unified profile: recent behavior, channel preferences, consent flags. It scores each eligible channel based on recency of engagement, historical conversion rates, and current context like time of day or device type.

The output is a ranked list. The agent picks the top option that passes all suppression rules.

Teams sometimes assume “next best channel” means “most engaged channel.” But an agent should also factor in message type. A price-drop alert performs better on push, while a detailed product recommendation suits email.
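The scoring and ranking steps above can be sketched as follows. This is not how Sirius AI scores channels; it is a hypothetical linear score that combines engagement recency, historical conversion rate, device context, and a message-type affinity table, after dropping any channel the user hasn't opted into. All weights, names, and affinity values are illustrative assumptions.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative (hypothetical) affinity of each message type for each channel, 0..1.
MESSAGE_TYPE_AFFINITY = {
    "price_drop": {"push": 1.0, "sms": 0.7, "email": 0.4},
    "recommendation": {"push": 0.3, "sms": 0.2, "email": 1.0},
}


@dataclass
class ChannelStats:
    days_since_engagement: float  # recency of the user's last engagement on this channel
    conversion_rate: float        # historical conversion rate on this channel (0..1)


@dataclass
class UserProfile:
    consent: dict[str, bool]        # opt-in flag per channel
    stats: dict[str, ChannelStats]  # per-channel engagement history
    device: str = "desktop"         # current context


def score_channel(channel: str, stats: ChannelStats, message_type: str, device: str) -> float:
    recency = 1.0 / (1.0 + stats.days_since_engagement)  # 1.0 if engaged today, decays with age
    affinity = MESSAGE_TYPE_AFFINITY.get(message_type, {}).get(channel, 0.5)
    context = 1.2 if channel == "push" and device == "mobile" else 1.0
    return (0.4 * recency + 0.6 * stats.conversion_rate) * affinity * context


def rank_channels(user: UserProfile, message_type: str) -> list[tuple[str, float]]:
    """Score every channel the user has opted into, highest score first."""
    ranked = [
        (channel, score_channel(channel, stats, message_type, user.device))
        for channel, stats in user.stats.items()
        if user.consent.get(channel, False)  # consent-aware suppression: no opt-in, no score
    ]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


def pick_channel(user: UserProfile, message_type: str) -> Optional[str]:
    ranked = rank_channels(user, message_type)
    return ranked[0][0] if ranked else None  # None means "send nothing"


user = UserProfile(
    consent={"push": True, "email": True, "sms": False},
    stats={
        "push": ChannelStats(days_since_engagement=1, conversion_rate=0.05),
        "email": ChannelStats(days_since_engagement=0, conversion_rate=0.10),
        "sms": ChannelStats(days_since_engagement=0, conversion_rate=0.50),
    },
    device="mobile",
)
print(pick_channel(user, "price_drop"))      # push
print(pick_channel(user, "recommendation"))  # email
```

Because consent is checked before scoring, SMS never appears in this user's ranking even though it has the highest conversion rate.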

Conflict resolution and global frequency caps.

To prevent over-messaging, the agent enforces global frequency caps that limit how many marketing messages a user receives within a given period across all channels. It also resolves conflicts when multiple journeys target the same user simultaneously.

The agent prioritizes by business value. A high-intent cart abandonment message outranks a generic promotion.
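A global cap and value-based conflict resolution can be combined in one arbitration step. The sketch below assumes a hypothetical policy of at most three marketing messages per user in a rolling seven-day window across all channels, and assumes each competing journey carries a business-value estimate; neither number comes from a specific platform.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

MAX_MESSAGES = 3            # hypothetical cap, counted across all channels
WINDOW = timedelta(days=7)  # hypothetical rolling window


@dataclass
class Candidate:
    journey: str
    business_value: float  # e.g. expected revenue of sending this message


def under_global_cap(send_log: list[datetime], now: datetime) -> bool:
    """True if the user has received fewer than MAX_MESSAGES in the rolling window."""
    recent = [sent for sent in send_log if now - sent < WINDOW]
    return len(recent) < MAX_MESSAGES


def arbitrate(candidates: list[Candidate], send_log: list[datetime], now: datetime) -> Optional[Candidate]:
    """Pick the single highest-value message, or None if capped or nothing is queued."""
    if not candidates or not under_global_cap(send_log, now):
        return None
    return max(candidates, key=lambda c: c.business_value)


now = datetime(2024, 5, 10, 12, 0)
send_log = [now - timedelta(days=1), now - timedelta(days=2)]  # sends from every channel
queued = [Candidate("generic_promo", 2.0), Candidate("cart_abandonment", 40.0)]
print(arbitrate(queued, send_log, now).journey)  # cart_abandonment
```

Keeping one send log per user, rather than one per channel or journey, is what makes the cap global.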

Siloed journey builders lack this central arbitration layer. Most marketing clouds bolt journey orchestration onto separately acquired components: a CDP here, an email tool there, a decisioning engine elsewhere. This creates latency and data inconsistency. A natively unified platform eliminates these integration seams, which is why central arbitration should be a key evaluation criterion.

Without unified orchestration, two teams can unknowingly message the same user within minutes. If you want to see what real-time arbitration plus global caps looks like in a live stack, book a demo.

High-impact use cases for cross-channel AI agents.

You can’t deploy agents everywhere at once. Pick use cases with high-frequency triggers, clear success metrics, and multi-channel handoff potential.

Recover abandoned carts and bookings.

The trigger is a cart or booking abandonment event, and it fires often: on average, nearly 70% of online carts are abandoned. The agent decides which channel to use, how soon to send, and whether to include a discount.

- Trigger: Cart abandoned with value above threshold

- Decision: Check last-engaged channel, consent status, time since last message

- Action: Send personalized reminder on highest-scoring channel

- Outcome: Measure incremental revenue vs. holdout group
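The trigger, decision, and action steps above can be sketched as a single function. It assumes a hypothetical cart-value threshold, a 12-hour quiet period, and a 10% holdout assigned by hashing the user ID so that each user stays in the same group across sessions. For brevity it uses the last-engaged channel as a stand-in for a full channel score.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

CART_VALUE_THRESHOLD = 50.0    # hypothetical minimum cart value worth acting on
MIN_GAP = timedelta(hours=12)  # hypothetical quiet period since the last message
HOLDOUT_PERCENT = 10           # hypothetical share of users who never get the reminder


@dataclass
class CartEvent:
    user_id: str
    cart_value: float
    last_engaged_channel: str
    consented_channels: set[str]
    last_message_at: Optional[datetime] = None


def in_holdout(user_id: str, percent: int = HOLDOUT_PERCENT) -> bool:
    """Stable assignment: hashing the ID puts a user in the same group every time."""
    bucket = int(hashlib.sha256(user_id.encode()).hexdigest(), 16) % 100
    return bucket < percent


def decide(event: CartEvent, now: datetime) -> Optional[str]:
    """Return the channel for the reminder, or None to send nothing."""
    if event.cart_value < CART_VALUE_THRESHOLD:  # trigger condition not met
        return None
    if in_holdout(event.user_id):  # holdout users are the baseline for incremental revenue
        return None
    if event.last_message_at and now - event.last_message_at < MIN_GAP:
        return None
    if event.last_engaged_channel in event.consented_channels:
        return event.last_engaged_channel
    for channel in ("push", "email", "sms"):  # fallback order is an assumption
        if channel in event.consented_channels:
            return channel
    return None
```

Returning `None` for holdout users, rather than skipping them upstream, keeps the holdout decision in the same code path that the outcome measurement relies on.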

Agents outperform static drip sequences because they adapt timing and channel per user. For B2B teams with longer sales cycles, the same agent architecture applies to lead nurture: detecting buying signals like pricing page visits or multiple stakeholder logins, then triggering personalized outreach on the highest-engagement channel. Browse the product demo hub to see how different agent patterns map to real journeys.

Drive conversational commerce with a Shopping Agent.

Rather than relying on passive product pages, a Shopping Agent engages users in real-time dialogue: asking about preferences, curating product bundles, and answering FAQs to build purchase confidence. The agent pulls from product catalogs, user history, and inventory data to recommend relevant items. This use case works especially well for categories where buyers need guidance, such as fashion, electronics, and home goods. Measure success through average order value lift and conversion rate from agent-assisted sessions vs. unassisted ones. In travel, the same pattern extends to abandoned bookings: flight or hotel searches where the agent can re-engage with updated pricing or availability.

Qualify leads and book appointments.

Agents qualify inbound leads through conversational interactions on chat or WhatsApp. They score responses and route high-intent leads to sales or book a meeting directly.

Fully autonomous booking works well for standardized offerings like demos. For complex or custom needs, define escalation criteria so the agent hands off gracefully. In financial services, for example, the agent can pre-qualify loan applicants by collecting income and credit information via WhatsApp before routing them to an advisor.
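Lead qualification with escalation criteria can be sketched as a points-based score plus a routing rule. The questions, point values, and 70-point threshold are hypothetical; in practice they would come from your sales team's qualification criteria.

```python
from dataclasses import dataclass

QUALIFIED_THRESHOLD = 70  # hypothetical score a lead needs to skip nurture


@dataclass
class LeadAnswers:
    has_budget: bool
    timeline_months: int        # how soon the lead plans to buy
    is_decision_maker: bool
    needs_custom_solution: bool


def qualify(a: LeadAnswers) -> int:
    """Hypothetical points-based score built from the lead's chat or WhatsApp answers."""
    score = 40 if a.has_budget else 0
    if a.timeline_months <= 3:
        score += 30
    elif a.timeline_months <= 12:
        score += 10
    score += 30 if a.is_decision_maker else 0
    return score


def route(a: LeadAnswers) -> str:
    if qualify(a) < QUALIFIED_THRESHOLD:
        return "nurture"
    if a.needs_custom_solution:
        return "handoff_to_sales"  # escalation criterion: complex or custom need
    return "book_demo"             # standardized offering: book autonomously


print(route(LeadAnswers(True, 2, True, False)))  # book_demo
print(route(LeadAnswers(True, 2, True, True)))   # handoff_to_sales
```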

Deflect tickets and resolve support queries.

Support agents handle FAQ resolution, order status checks, and routine service requests across chat, WhatsApp, and SMS. Deflection rate (the share of tickets resolved without human handoff) is the primary metric.

Support agents require access to knowledge bases, order systems, and clear escalation rules. Audit integration readiness before launch.

Trust, safety, and governance for AI agents.

Enterprise teams won’t deploy agents without clear answers on hallucination risk, data handling, and human oversight.

Reduce hallucination risk.

Hallucinations occur when a model generates plausible but false information. Ground agent responses in retrieved data (product catalogs, knowledge bases, CRM fields) rather than open-ended generation, and have the agent respond with “I don’t know” when retrieval fails.

Grounding involves a trade-off: tighter grounding reduces creativity. Support agents need high accuracy, while shopping agents can tolerate more exploratory responses.
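A minimal sketch of grounding with an “I don’t know” fallback: the agent only answers with a retrieved passage and falls back when the best match is too weak. Word overlap stands in for real retrieval (a production system would more likely use embeddings or a search index), and the per-agent thresholds, which encode the accuracy trade-off above, are assumptions.

```python
import re
from typing import Optional

FALLBACK = "I don't know. Let me connect you with someone who can help."

# Hypothetical minimum share of question words a passage must cover before the
# agent answers; support is stricter than shopping.
MIN_OVERLAP = {"support": 0.6, "shopping": 0.3}


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, knowledge_base: dict[str, str]) -> tuple[Optional[str], float]:
    """Return the best-matching passage and the share of question words it covers."""
    q = tokens(question)
    best, best_score = None, 0.0
    for passage in knowledge_base.values():
        score = len(q & tokens(passage)) / len(q) if q else 0.0
        if score > best_score:
            best, best_score = passage, score
    return best, best_score


def grounded_answer(question: str, knowledge_base: dict[str, str], agent_type: str = "support") -> str:
    passage, score = retrieve(question, knowledge_base)
    if passage is None or score < MIN_OVERLAP[agent_type]:
        return FALLBACK  # retrieval failed: say so instead of guessing
    return passage       # answer only with retrieved text, never free generation


kb = {
    "returns": "You can return items within 30 days of delivery.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}
question = "How many days does standard shipping take?"
print(grounded_answer(question, kb, "shopping"))  # Standard shipping takes 3 to 5 business days.
print(grounded_answer(question, kb, "support"))   # I don't know. Let me connect you with someone who can help.
```

The same question gets an answer from the lenient shopping agent but the fallback from the stricter support agent, which is the trade-off described above.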

Enforce knowledge boundaries.

Agents should have explicit topic boundaries: don’t discuss competitor products, don’t provide medical advice. Enforce these via prompt instructions, retrieval filters, and post-generation classifiers.

- Define allowed and prohibited topics

- Configure retrieval to exclude out-of-scope content

- Add a classifier to flag boundary violations before sending
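The retrieval-filter and classifier steps above can be sketched as two small checks (prompt instructions are left out because they are plain text). The keyword matcher is a deliberately simple stand-in for a trained classifier, and every topic name and term in `PROHIBITED_TOPICS`, including the competitor names `acme corp` and `globex`, is hypothetical.

```python
import re

# Hypothetical boundary configuration. A trained classifier would normally do
# the post-generation check; keyword matching is only a stand-in.
PROHIBITED_TOPICS = {
    "competitors": ["acme corp", "globex"],
    "medical_advice": ["diagnose", "dosage", "prescription"],
}


def retrieval_filter(passages: list[dict]) -> list[dict]:
    """Drop retrieved passages tagged with a prohibited topic before the model sees them."""
    return [p for p in passages if p.get("topic") not in PROHIBITED_TOPICS]


def boundary_violations(draft: str) -> list[str]:
    """Return the prohibited topics a draft reply touches."""
    text = draft.lower()
    return [
        topic
        for topic, terms in PROHIBITED_TOPICS.items()
        if any(re.search(rf"\b{re.escape(term)}\b", text) for term in terms)
    ]


def safe_to_send(draft: str) -> bool:
    return not boundary_violations(draft)


print(boundary_violations("What dosage should I take?"))  # ['medical_advice']
```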

Route with human-in-the-loop.

The agent should hand off to a human for low-confidence responses, high-stakes requests like account cancellation, or explicit user requests for a live agent. The handoff must preserve conversation context.

Teams sometimes set escalation thresholds too low, flooding human queues. Start with a higher threshold and tune down based on customer satisfaction scores. If you’re testing governance before rollout, book a demo to see the controls in action.
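The three handoff conditions above can be sketched as a single routing check that always passes the full transcript to the human. The intent labels, trigger phrases, and threshold value are hypothetical. The threshold is applied to uncertainty (1 minus the model's confidence), so a lower value escalates more conversations, which matches the advice to start high and tune down.

```python
from dataclasses import dataclass
from typing import Optional

HIGH_STAKES_INTENTS = {"cancel_account", "dispute_charge"}  # hypothetical intent labels
LIVE_AGENT_PHRASES = ("live agent", "real person", "talk to a human")
# Escalate when uncertainty (1 - confidence) exceeds this value. Lower values
# escalate more conversations, so start high and tune down using CSAT.
ESCALATION_THRESHOLD = 0.7


@dataclass
class Turn:
    role: str  # "user" or "agent"
    text: str


@dataclass
class Handoff:
    reason: str
    transcript: list[Turn]  # full context, so the human does not restart the conversation


def escalation_reason(confidence: float, intent: str, user_message: str) -> Optional[str]:
    message = user_message.lower()
    if any(phrase in message for phrase in LIVE_AGENT_PHRASES):
        return "user_requested"
    if intent in HIGH_STAKES_INTENTS:
        return "high_stakes"
    if 1.0 - confidence > ESCALATION_THRESHOLD:
        return "low_confidence"
    return None


def maybe_handoff(confidence: float, intent: str, transcript: list[Turn]) -> Optional[Handoff]:
    reason = escalation_reason(confidence, intent, transcript[-1].text)
    return Handoff(reason, list(transcript)) if reason else None
```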

Implementation playbook for cross-channel AI agents.

Most failed agent deployments trace back to data readiness, not model quality. Teams that skip identity resolution end up with agents that message the wrong users.

Define success metrics and guardrails.

Define a primary metric, such as incremental revenue from cart recovery. Define a guardrail metric that must not get worse, such as unsubscribe rate.

Can you measure this metric today with a holdout group? If not, instrumentation comes before agent deployment.

Unify identities and data sources.

The agent needs a unified customer profile to make cross-channel decisions. This requires identity resolution, which links email addresses, phone numbers, and device IDs to a single profile. It also requires real-time event streaming from web, app, and transactional systems.

Teams underestimate the time to clean up duplicate profiles. If identity resolution is incomplete, the agent treats the same user as multiple people.
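Identity resolution of this kind is often implemented with a union-find (disjoint-set) structure: every record is linked to each identifier it carries, so records that share an email, phone number, or device ID end up in the same profile. The sketch below assumes records arrive as simple dictionaries and applies only basic normalization (lowercasing, stripping non-digits from phone numbers); real systems add fuzzier matching rules.

```python
from collections import defaultdict


class UnionFind:
    """Disjoint-set structure with path halving."""

    def __init__(self) -> None:
        self.parent: dict[str, str] = {}

    def find(self, x: str) -> str:
        self.parent.setdefault(x, x)
        while self.parent[x] != x:
            self.parent[x] = self.parent[self.parent[x]]  # path halving
            x = self.parent[x]
        return x

    def union(self, a: str, b: str) -> None:
        root_a, root_b = self.find(a), self.find(b)
        if root_a != root_b:
            self.parent[root_b] = root_a


def normalize(kind: str, value: str) -> str:
    value = value.strip().lower()
    if kind == "phone":
        value = "".join(ch for ch in value if ch.isdigit())
    return f"{kind}:{value}"


def resolve_profiles(records: list[dict[str, str]]) -> list[set[int]]:
    """Group record indexes that share any identifier into one profile."""
    uf = UnionFind()
    for i, record in enumerate(records):
        node = f"record:{i}"
        uf.find(node)  # register records even if they carry no identifiers
        for kind, value in record.items():
            if value:
                uf.union(node, normalize(kind, value))
    groups: dict[str, set[int]] = defaultdict(set)
    for i in range(len(records)):
        groups[uf.find(f"record:{i}")].add(i)
    return list(groups.values())


records = [
    {"email": "Ana@Example.com", "device": "ios-123"},
    {"phone": "+1 (555) 010-0000", "device": "ios-123"},
    {"email": "ana@example.com"},
    {"email": "bo@example.com"},
]
print(resolve_profiles(records))  # [{0, 1, 2}, {3}]
```

Records 0 and 1 share a device and records 0 and 2 share an email once lowercased, so all three merge transitively; without that normalization the agent would treat them as separate people.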

Once profiles are unified, AI-powered predictive segments, such as likelihood to purchase or churn, enable the agent to prioritize high-value users automatically.

Map a clean CRM data handoff.

When the agent qualifies a lead or resolves a ticket, that outcome must sync to CRM or support systems. Without write-backs, sales and support teams lose visibility.

- Confirm CRM API supports real-time writes

- Define which agent actions trigger a write-back

- Test round-trip latency from agent action to CRM update
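The three checks above can be exercised with a small harness that writes an agent outcome, reads it back, and times the round trip. `InMemoryCRM` is a hypothetical stand-in rather than any vendor's SDK, and the set of actions that trigger a write-back is an assumption to replace with your own list.

```python
import time
from typing import Optional

# Hypothetical list of agent actions that must sync to the system of record.
WRITE_BACK_ACTIONS = {"lead_qualified", "meeting_booked", "ticket_resolved"}


class InMemoryCRM:
    """Hypothetical stand-in for a real CRM client; swap in your CRM's SDK or REST API."""

    def __init__(self) -> None:
        self.records: dict[str, dict] = {}

    def upsert(self, record_id: str, fields: dict) -> None:
        self.records.setdefault(record_id, {}).update(fields)

    def get(self, record_id: str) -> dict:
        return dict(self.records.get(record_id, {}))


def write_back(crm: InMemoryCRM, action: str, record_id: str, fields: dict) -> Optional[float]:
    """Write an agent outcome and return round-trip latency in ms, or None if not configured."""
    if action not in WRITE_BACK_ACTIONS:
        return None
    start = time.perf_counter()
    crm.upsert(record_id, {**fields, "last_agent_action": action})
    # Read the record back so the measurement covers the full round trip.
    if crm.get(record_id).get("last_agent_action") != action:
        raise RuntimeError(f"write-back for {record_id} is not visible")
    return (time.perf_counter() - start) * 1000


crm = InMemoryCRM()
latency_ms = write_back(crm, "lead_qualified", "lead-42", {"lead_score": 85})
print(crm.get("lead-42"))  # {'lead_score': 85, 'last_agent_action': 'lead_qualified'}
```

Against a real CRM, the read-back may need to poll, since some APIs apply writes asynchronously.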

Pilot high-value journeys and measure incremental lift.

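Start with a single journey. Run a holdout test in which a randomly assigned subset of users receives no agent intervention. Measure incremental lift by comparing revenue per user in the agent group with revenue per user in the holdout group; subtracting raw revenue totals would mostly reflect the difference in group size.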

If lift is positive and guardrail metrics hold, add another journey. If lift is flat, diagnose data quality or channel selection before scaling.
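The lift calculation and the scale-or-diagnose rule can be sketched as follows. Revenue is compared per user because a small holdout group will always have less total revenue than the agent group. The unsubscribe-rate guardrail and the field names are assumptions, and a real analysis should also test whether the difference is statistically significant before acting on it.

```python
from dataclasses import dataclass


@dataclass
class GroupResult:
    users: int
    revenue: float
    unsubscribes: int


def incremental_lift(agent: GroupResult, holdout: GroupResult) -> dict[str, float]:
    agent_rpu = agent.revenue / agent.users        # revenue per user, agent group
    holdout_rpu = holdout.revenue / holdout.users  # revenue per user, holdout group
    lift_per_user = agent_rpu - holdout_rpu
    return {
        "lift_per_user": lift_per_user,
        "incremental_revenue": lift_per_user * agent.users,  # scaled to the treated population
    }


def next_step(agent: GroupResult, holdout: GroupResult) -> str:
    lift = incremental_lift(agent, holdout)["lift_per_user"]
    # Guardrail: the agent group's unsubscribe rate must not exceed the holdout's.
    guardrail_ok = agent.unsubscribes / agent.users <= holdout.unsubscribes / holdout.users
    if lift > 0 and guardrail_ok:
        return "add_next_journey"
    return "diagnose_before_scaling"


agent = GroupResult(users=9_000, revenue=540_000.0, unsubscribes=45)
holdout = GroupResult(users=1_000, revenue=50_000.0, unsubscribes=5)
print(incremental_lift(agent, holdout)["incremental_revenue"])  # 90000.0
print(next_step(agent, holdout))  # add_next_journey
```

In this hypothetical example the lift is 10 per user, or 90,000 in incremental revenue; subtracting raw totals would have suggested 490,000.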

Choose build vs buy for agentic orchestration.

| Factor | Build | Buy |
| --- | --- | --- |
| Time to first pilot | Longer | Shorter |
| Engineering headcount | High | Low |
| Customization depth | Unlimited | Platform-constrained |
| Maintenance burden | Ongoing | Vendor-managed |

If the team lacks dedicated machine learning (ML) engineering, building will stall after the prototype. Use the product demo hub to compare what you can ship fast versus what you’d have to own forever.

Pricing, return on investment (ROI), and scale for cross-channel AI agents.

How do you justify this investment to finance? Model total cost, not just license fees. Measure incremental impact, not just campaign metrics.

Model total cost beyond licenses.

- License or usage fees: Per-message, per-user, or flat subscription

- Integration and setup: Engineering time to connect data sources and CRM

- Ongoing maintenance: Prompt tuning, model updates, governance reviews

- Compute and API costs: Token costs scale with volume if using external large language model (LLM) providers
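The cost categories above can be rolled into a simple multi-year model. The sketch assumes a one-time integration cost, yearly license and maintenance costs, and LLM costs that scale linearly with message volume, with ROI computed against incremental revenue from a holdout test rather than campaign-attributed revenue. All figures in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CostModel:
    license_per_year: float          # subscription, or usage fees converted to a yearly figure
    integration_one_time: float      # engineering time to connect data sources and CRM
    maintenance_per_year: float      # prompt tuning, model updates, governance reviews
    llm_cost_per_1k_messages: float  # external LLM token/API cost, assumed linear in volume
    messages_per_year: int

    def total_cost(self, years: int) -> float:
        variable = self.llm_cost_per_1k_messages * self.messages_per_year / 1000
        yearly = self.license_per_year + self.maintenance_per_year + variable
        return self.integration_one_time + years * yearly


def roi(incremental_revenue_per_year: float, costs: CostModel, years: int) -> float:
    """(incremental revenue - total cost) / total cost over the same horizon."""
    total = costs.total_cost(years)
    return (incremental_revenue_per_year * years - total) / total


costs = CostModel(
    license_per_year=60_000,
    integration_one_time=40_000,
    maintenance_per_year=20_000,
    llm_cost_per_1k_messages=2.0,
    messages_per_year=5_000_000,
)
print(costs.total_cost(years=3))         # 310000.0
print(round(roi(200_000, costs, 3), 2))  # 0.94
```

Spreading the one-time integration cost over a multi-year horizon is what separates this from a license-only comparison.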

Request a total cost of ownership estimate from vendors. If a vendor can’t provide one, that’s a red flag.

What to look for in vendor evaluations:

- Does the agent execute or just recommend?

- Is the CDP native or integrated via third-party connector?

- Does the platform support global frequency caps across all channels natively?

- Can you run holdout tests without engineering support?